AI agents are no longer a research experiment. They are shipping in production, running multi-step tasks autonomously, and changing how teams build software. But picking the right tools is harder than ever — the landscape has exploded, and the wrong choices at the foundation level create technical debt that stings months later.

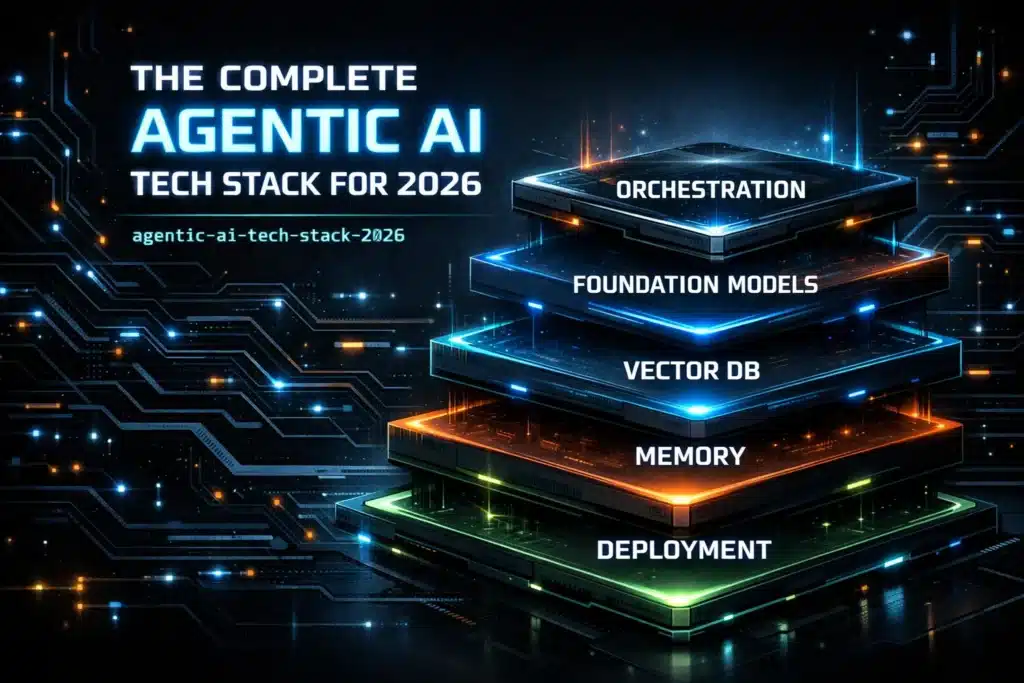

This guide breaks down the complete agentic AI tech stack for 2026: the tools practitioners are using right now, why they matter, and how they fit together. Whether you are just getting started with AI agents or scaling one that already exists, it will give you a clear map of the terrain.

Let us get into it.


What Is an Agentic AI Tech Stack?

Before diving into specific tools, it is worth being precise about what we mean. An agentic AI tech stack refers to the collection of technologies that power autonomous AI systems — systems that can plan, reason, use tools, call APIs, remember context, and complete multi-step goals with minimal human intervention.

Unlike a simple chatbot pipeline, the agentic AI tech stack spans eight distinct layers:

- Deployment and Infrastructure

- Evaluation and Monitoring

- Foundation Models

- Orchestration Frameworks

- Vector Databases

- Embedding Models

- Data Ingestion and Extraction

- Memory and Context Management

Each layer solves a different problem. Miss one, and your agent either breaks in production, costs a fortune to run, or produces outputs that cannot be trusted. The complete agentic AI tech stack ties all eight together into a coherent, working system.


1. Deployment and Infrastructure

The deployment layer is where your agentic AI tech stack meets the real world. Agents need low-latency compute, scalable runtimes, and infrastructure that handles spiky, unpredictable workloads — because AI agents do not run like traditional request-response applications.

1.1 Modal — modal.com

Modal is purpose-built for AI workloads. It lets you deploy Python functions as serverless GPU or CPU jobs with zero infrastructure configuration. For agentic workloads that need on-demand compute, such as spinning up a sub-agent to run code or process a large document batch, Modal is one of the cleanest solutions on the market. Cold starts are fast, and the pricing model makes sense for bursty AI workflows.

1.2 Docker — docker.com

Docker remains a foundational piece of any production agentic AI tech stack. Containerizing your agent runtime ensures consistent behavior across dev, staging, and production environments. When your agent has custom dependencies, tool wrappers, or local model binaries, a container image is what keeps builds reproducible.

1.3 Kubernetes (K8s) — kubernetes.io

When you are orchestrating fleets of agents at scale, Kubernetes handles the scheduling, autoscaling, and fault recovery. K8s is the backbone of enterprise-grade agentic AI tech stack deployments where uptime and horizontal scalability are not optional.


1.4 Railway — railway.app

Railway is the simplest way to deploy full-stack agent applications without managing cloud infrastructure directly. It supports Python, Node, Go, and Docker, and its instant deploy workflow makes it extremely popular for teams that want to ship fast without a dedicated DevOps engineer.

1.5 AWS Bedrock — aws.amazon.com/bedrock

AWS Bedrock gives enterprise teams managed access to foundation models alongside the full AWS ecosystem for storage, queuing, and security. If your organization is already AWS-native, Bedrock is the natural home for a production agentic AI tech stack because it handles compliance, IAM, and data residency out of the box.

1.6 Fly.io — fly.io

Fly.io deploys containerized applications close to users across 30+ regions globally. For latency-sensitive agentic applications where tool call round trips need to be fast, Fly.io’s edge deployment model gives you a meaningful performance advantage over single-region setups.

2. Evaluation and Monitoring

You cannot improve what you do not measure. Evaluation is the layer of the agentic AI tech stack that most teams underinvest in early — and regret later. Agents fail in creative, unpredictable ways. You need tooling that captures traces, measures quality, and flags regressions.

2.1 LangSmith — smith.langchain.com

LangSmith is the most widely adopted observability tool for LLM applications. It captures full traces of every agent run — every tool call, every model call, every memory lookup — and lets you replay, annotate, and evaluate them. If you are using LangChain or LangGraph anywhere in your agentic AI tech stack, LangSmith integrates in minutes.
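
To make the idea of a trace concrete, here is a minimal sketch in plain Python. It is not LangSmith's API: the `traced` decorator and the in-memory `TRACE` list are hypothetical names used only for illustration, and a real observability tool would send these records to a backend instead.

```python
import functools
import time

# Hypothetical in-memory trace store; real tools ship these records to a backend.
TRACE = []


def traced(step_name):
    """Record the inputs, output, and duration of each call to the wrapped function."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            result = fn(*args, **kwargs)
            TRACE.append({
                "step": step_name,
                "args": args,
                "kwargs": kwargs,
                "output": result,
                "seconds": time.perf_counter() - start,
            })
            return result
        return wrapper
    return decorator


@traced("tool:add")
def add(a, b):
    return a + b


@traced("agent:run")
def run_agent(x):
    # The nested tool call finishes first, so its record is appended first.
    return add(x, 1) * 2
```

Calling `run_agent(2)` leaves two records in `TRACE`, one per step, which is the raw material that replay and annotation features are built on.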

2.2 Weights & Biases (W&B) — wandb.ai

W&B started as an experiment tracking tool for ML training runs, and it has grown into a full observability platform for LLM systems. Its Weave product is specifically designed for tracing and evaluating LLM chains and agents. For teams that are also fine-tuning models, W&B unifies training and inference monitoring in one place.

2.3 Helicone — helicone.ai

Helicone sits as a proxy in front of your LLM API calls and logs every request and response without any code changes. It gives you cost tracking, latency percentiles, error rates, and user-level analytics. For lean teams that want production monitoring without complex instrumentation, it is one of the fastest ways to add visibility to your agentic AI tech stack.

2.4 Arize Phoenix — phoenix.arize.com

Phoenix is an open-source observability tool from Arize AI that runs locally or in the cloud. It is especially strong on LLM evaluation — it ships with built-in evaluators for hallucination detection, relevance scoring, and toxicity, which makes it powerful for teams that need to benchmark agent output quality systematically.

2.5 Braintrust — braintrustdata.com

Braintrust is a developer-first evaluation platform that lets you write evals in code, run them in CI/CD, and track regressions over time. It is built for teams that treat LLM evaluation as an engineering discipline rather than a manual review process. For any serious agentic AI tech stack, automated evals in the deployment pipeline are non-negotiable.
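
As a rough illustration of what "evals in code" can look like, the sketch below scores an agent against a small dataset and fails when the score drops under a threshold. It does not use Braintrust's SDK; `toy_agent`, `DATASET`, `exact_match`, and `check_regression` are all hypothetical names, and exact-match scoring is only one of many possible scorers.

```python
def exact_match(expected, actual):
    """Score 1.0 when the answers match, ignoring case and surrounding whitespace."""
    return 1.0 if expected.strip().lower() == actual.strip().lower() else 0.0


def run_evals(agent, dataset, scorer=exact_match):
    """Return the mean score of the agent over all cases in the dataset."""
    scores = [scorer(case["expected"], agent(case["input"])) for case in dataset]
    return sum(scores) / len(scores)


def check_regression(agent, dataset, threshold):
    """Raise if the mean score falls below the threshold, so a CI job would fail."""
    score = run_evals(agent, dataset)
    if score < threshold:
        raise AssertionError(f"eval score {score:.2f} below threshold {threshold:.2f}")
    return score


def toy_agent(question):
    # Hypothetical stand-in for a real agent call.
    answers = {"capital of france?": "Paris", "2 + 2?": "4"}
    return answers.get(question.lower(), "unknown")


DATASET = [
    {"input": "Capital of France?", "expected": "paris"},
    {"input": "2 + 2?", "expected": "4"},
    {"input": "Largest planet?", "expected": "Jupiter"},
]
```

Running `check_regression(toy_agent, DATASET, threshold=0.6)` passes because two of three cases match. Raising the threshold to 0.9 would fail the check, which is the behavior you want from a pipeline gate.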

2.6 Langfuse — langfuse.com

Langfuse is an open-source LLM engineering platform with strong tracing, evaluation, and prompt management features. It supports OpenTelemetry, integrates with every major framework, and can be self-hosted — which matters for teams with strict data governance requirements.

3. Foundation Models

The foundation model layer is the brain of your agentic AI tech stack. These are the large language models that reason, plan, generate, and respond. The right model depends on your task type, latency requirements, cost envelope, and whether you need proprietary or open-weight models.

3.1 OpenAI GPT-4o / o3 — openai.com

OpenAI’s models remain the default choice for many production agents due to their instruction-following quality, tool use reliability, and extensive ecosystem support. The o3 reasoning model in particular has raised the bar for complex, multi-step agentic tasks that require deliberate planning. Most agentic AI tech stack implementations at least benchmark against GPT-4o.

3.2 Anthropic Claude — anthropic.com

Claude models are known for their long context windows, careful instruction adherence, and low hallucination rates on structured tasks. Claude’s extended thinking capability makes it particularly well-suited for agentic workflows that require step-by-step reasoning before acting. Claude is increasingly the model of choice in enterprise agentic AI tech stack deployments where accuracy matters more than raw speed.

3.3 Google Gemini — deepmind.google/technologies/gemini

Gemini’s native multimodality — handling text, image, audio, and video in a single model — makes it uniquely powerful for agents that work across multiple data types. Gemini 2.0 introduced real-time audio and video streaming capabilities, opening up new categories of agentic applications that were not previously practical.

3.4 Meta Llama — llama.meta.com

Llama is the open-weight foundation of much of the AI agent ecosystem. Teams that need full control over their model — for fine-tuning, on-premise deployment, or cost optimization — build on Llama. The Llama 3 family in particular delivers competitive performance at a fraction of the API cost of proprietary models.

3.5 Mistral AI — mistral.ai

Mistral produces efficient, high-quality open-weight models that punch above their weight class. Mistral Large and Mistral Small offer strong reasoning at lower costs than GPT-4 class models, and Mistral’s API provides a lean, fast alternative for latency-sensitive parts of your agentic AI tech stack.

3.6 Cohere Command — cohere.com

Cohere’s Command models are optimized for enterprise use cases — retrieval-augmented generation, grounding responses in documents, and tool use at scale. Cohere is a strong choice for enterprise agentic AI tech stack implementations where data privacy, on-premise deployment, and domain-specific fine-tuning are requirements.

4. Orchestration Frameworks

Orchestration is the connective tissue of the agentic AI tech stack. It is the layer that defines how your agent plans, routes between tools, manages state, and coordinates with other agents.

4.1 LangChain — langchain.com

LangChain is the most widely adopted framework for building LLM applications. Its massive ecosystem of integrations, tools, and community-built components means you rarely have to write integration code from scratch. For teams building their first agentic AI tech stack, LangChain provides the fastest path to a working prototype.

4.2 LangGraph — langchain.com/langgraph

LangGraph extends LangChain with a stateful, graph-based approach to agent orchestration. It treats agents as nodes in a directed graph, with edges representing transitions between states. This model is far better suited to complex, multi-step agents than simple chain-based approaches, making LangGraph the production-grade choice for serious agentic AI tech stack builds.
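
The graph model is easier to see in code. The following is a plain-Python sketch of the concept, not LangGraph's actual API: `MiniGraph`, `END`, and the `plan` and `act` nodes are hypothetical names chosen for illustration. Each node takes the current state and returns an updated state, and a router function per node picks the next node.

```python
END = "__end__"  # hypothetical sentinel that marks the terminal state


class MiniGraph:
    """Nodes transform a state dict; routers choose the next node from that state."""

    def __init__(self):
        self.nodes = {}
        self.routers = {}

    def add_node(self, name, fn):
        self.nodes[name] = fn

    def add_edge(self, source, router):
        # router(state) returns the name of the next node, or END to stop.
        self.routers[source] = router

    def run(self, start, state, max_steps=20):
        current = start
        steps = 0
        while current != END:
            if steps >= max_steps:
                raise RuntimeError("graph did not reach END within max_steps")
            state = self.nodes[current](state)
            current = self.routers[current](state)
            steps += 1
        return state


def plan(state):
    return {**state, "plan": f"solve {state['task']}"}


def act(state):
    return {**state, "attempts": state.get("attempts", 0) + 1}


graph = MiniGraph()
graph.add_node("plan", plan)
graph.add_node("act", act)
graph.add_edge("plan", lambda s: "act")
# Conditional edge: loop on "act" until three attempts have been made.
graph.add_edge("act", lambda s: END if s["attempts"] >= 3 else "act")

result = graph.run("plan", {"task": "demo"})
```

The conditional edge on `act` is the part a simple chain cannot express: the loop and its exit condition live in the graph rather than in ad hoc control flow.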

4.3 CrewAI — crewai.com

CrewAI is a framework specifically designed for multi-agent systems — teams of AI agents that collaborate to accomplish a goal. It introduces the concept of roles, goals, and backstories for individual agents, and coordinates their work through a crew structure. For applications where different agents handle different specializations, CrewAI is one of the most intuitive frameworks available.

4.4 Microsoft AutoGen — microsoft.github.io/autogen

AutoGen is Microsoft’s framework for building multi-agent conversational systems. It supports complex patterns like agent-to-agent dialogue, human-in-the-loop interrupts, and code execution in sandboxed environments. AutoGen is particularly well-matched to enterprise use cases in the Microsoft ecosystem.

4.5 LlamaIndex — llamaindex.ai

LlamaIndex is the leading framework for RAG pipelines and data-centric agentic applications. While it started as a data indexing tool, it has grown into a full agent orchestration framework with strong support for tool use, multi-step retrieval, and structured outputs. It is indispensable in any agentic AI tech stack that handles document-heavy workloads.

4.6 Haystack — haystack.deepset.ai

Haystack by deepset is a production-ready AI orchestration framework with a pipeline-first design philosophy. It handles complex, branching pipelines elegantly and ships with built-in support for hybrid retrieval, re-ranking, and structured outputs. For enterprise search and knowledge management agents, Haystack is a battle-tested choice.

5. Vector Databases

Every agentic AI tech stack that handles retrieval-augmented generation or long-term memory needs a vector database. These are purpose-built for storing and querying high-dimensional embeddings at scale.
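
At its core, a vector query is a nearest-neighbor search over embeddings. The sketch below does this by brute force with cosine similarity. `TinyVectorStore` is a hypothetical class for illustration only; production databases rely on approximate indexes rather than scanning every vector.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class TinyVectorStore:
    """Brute-force store: keeps (id, vector) pairs and scans them all on each query."""

    def __init__(self):
        self.items = []

    def add(self, doc_id, vector):
        self.items.append((doc_id, vector))

    def query(self, vector, k=2):
        ranked = sorted(self.items, key=lambda item: cosine(vector, item[1]), reverse=True)
        return [doc_id for doc_id, _ in ranked[:k]]


store = TinyVectorStore()
store.add("a", [1.0, 0.0])
store.add("b", [0.0, 1.0])
store.add("c", [0.9, 0.1])
top = store.query([1.0, 0.0], k=2)
```

Here `top` contains the two ids whose vectors point most nearly in the same direction as the query. The tools in this section differ mainly in how they avoid the full scan at scale.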

5.1 Pinecone — pinecone.io

Pinecone is the go-to managed vector database for teams that want to get to production quickly. It handles indexing, scaling, and availability automatically, and its performance at large scale (billions of vectors) is well-documented. If you want a zero-ops vector layer in your agentic AI tech stack, Pinecone is the default.

5.2 Weaviate — weaviate.io

Weaviate is an open-source vector database that supports hybrid search (combining vector similarity with BM25 keyword search) natively. Its GraphQL API and module ecosystem make it highly extensible. For teams that need flexibility and want to avoid vendor lock-in, Weaviate is one of the strongest options.
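
Hybrid search can be pictured as blending two score lists. The sketch below normalizes each list and mixes them with a weight `alpha`. It is a generic weighted-fusion illustration, not Weaviate's implementation, and `hybrid_rank`, `min_max`, and the sample scores are hypothetical.

```python
def min_max(scores):
    """Rescale a dict of scores to the range [0, 1]."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {key: 1.0 for key in scores}
    return {key: (value - lo) / (hi - lo) for key, value in scores.items()}


def hybrid_rank(vector_scores, keyword_scores, alpha=0.5):
    """Rank ids by alpha * vector score + (1 - alpha) * keyword score.

    alpha=1.0 uses only vector similarity; alpha=0.0 uses only keyword scores.
    Documents missing from one list contribute 0.0 for that list.
    """
    vec = min_max(vector_scores)
    kw = min_max(keyword_scores)
    fused = {
        doc_id: alpha * vec.get(doc_id, 0.0) + (1 - alpha) * kw.get(doc_id, 0.0)
        for doc_id in set(vec) | set(kw)
    }
    # Sort by descending fused score, breaking ties by id for determinism.
    return sorted(fused, key=lambda doc_id: (-fused[doc_id], doc_id))


vector_scores = {"d1": 0.9, "d2": 0.5, "d3": 0.1}   # e.g. cosine similarities
keyword_scores = {"d2": 12.0, "d3": 3.0}            # e.g. BM25 scores
ranking = hybrid_rank(vector_scores, keyword_scores, alpha=0.5)
```

With equal weighting, `d2` moves ahead of `d1` because it scores well on both signals, which is the usual argument for hybrid retrieval.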

5.3 Qdrant — qdrant.tech

Qdrant is a Rust-based vector search engine known for exceptional performance and low memory footprint. It supports filtering, payload indexing, and sparse vectors, making it well-suited for production agentic AI tech stack deployments where performance and cost efficiency at scale matter.

5.4 Chroma — trychroma.com

Chroma is the developer-friendly, open-source vector database that has become the default choice for prototyping and smaller-scale production deployments. It runs embedded or as a server, requires no infrastructure setup, and integrates natively with LangChain and LlamaIndex. Most developers encounter Chroma first when learning to build on the agentic AI tech stack.

5.5 Milvus — milvus.io

Milvus is an open-source vector database designed for enterprise-grade, billion-scale deployments. It supports GPU acceleration, distributed architectures, and multiple index types. For organizations with very large corpora and strict data control requirements, Milvus is one of the most capable options in the stack.

5.6 pgvector — github.com/pgvector/pgvector

pgvector is a PostgreSQL extension that adds vector similarity search to a database your team probably already runs. For simpler agentic AI tech stack implementations — or for teams that want to avoid introducing a new database — pgvector is an elegant solution that keeps the stack lean and the operational overhead low.

6. Embedding Models

Embedding models convert raw text (or other data) into the numerical vectors that your vector database stores and queries. The quality of your embeddings directly determines the quality of your retrieval, which in turn determines the quality of your agent’s responses.

6.1 OpenAI text-embedding-3 — openai.com

OpenAI’s text-embedding-3-small and text-embedding-3-large are the most commonly used embedding models in production agentic AI tech stack deployments. They offer strong multilingual performance, predictable latency, and tight integration with the rest of the OpenAI API. text-embedding-3-small in particular delivers excellent cost-per-token efficiency.

6.2 Cohere Embed v3 — cohere.com

Cohere Embed v3 is among the highest-performing embedding models on standard benchmarks, with native support for different embedding types (search query vs. document). Its support for 100+ languages and its strong performance on domain-specific content make it a strong choice for enterprise applications.

6.3 Voyage AI — voyageai.com

Voyage AI produces domain-specific embedding models trained for code, finance, law, and medical content. When your agentic AI tech stack handles specialized knowledge, a domain-tuned embedding model can deliver significantly better retrieval quality than a general-purpose alternative.

6.4 Nomic Embed — nomic.ai

Nomic Embed is a fully open-source, high-performance embedding model that can run locally with no API dependency. It supports very long context windows (up to 8192 tokens), which is critical for embedding entire documents rather than chunks. For privacy-sensitive deployments, Nomic is one of the best open-source choices.

6.5 Sentence Transformers — sbert.net

The Sentence Transformers library (SBERT), originally developed at UKP Lab and now maintained by Hugging Face, is the foundation of most self-hosted embedding pipelines. It provides access to hundreds of pretrained models and makes fine-tuning your own domain-specific embeddings straightforward. For teams that need full control over the embedding layer of their agentic AI tech stack, SBERT is the starting point.

6.6 BGE (BAAI General Embedding) — huggingface.co/BAAI

The BGE model family from the Beijing Academy of Artificial Intelligence (BAAI) has repeatedly ranked near the top of the MTEB leaderboard (Massive Text Embedding Benchmark). BGE-M3 in particular supports multilingual embeddings and both dense and sparse retrieval, making it one of the most versatile open-weight embedding options available.

7. Data Ingestion and Extraction

Agents need data. Before a single query hits your retrieval system, raw documents — PDFs, emails, web pages, databases — need to be parsed, cleaned, chunked, and indexed. This is the data ingestion layer of the agentic AI tech stack, and getting it right is what separates agents that hallucinate from agents that actually know things.
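
Chunking is the step in that sequence that is easiest to show in a few lines. Below is a minimal sketch of fixed-size chunking with overlap, splitting on whitespace. The function name `chunk_words` and the default sizes are assumptions for illustration. Real pipelines often chunk by tokens, sentences, or document structure instead.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into chunks of up to chunk_size words, sharing overlap words between neighbors."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once this chunk reaches the end, to avoid a trailing fragment
        # that only repeats the overlap.
        if start + chunk_size >= len(words):
            break
    return chunks


sample = "a b c d e f g h i j"
pieces = chunk_words(sample, chunk_size=4, overlap=1)
```

The overlap means a sentence that straddles a boundary still appears whole in at least one chunk, at the cost of storing some words twice.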

7.1 Unstructured.io — unstructured.io

Unstructured is the most comprehensive open-source library for document parsing. It handles PDFs, Word files, HTML, Markdown, images with OCR, and dozens of other formats. Its output is normalized, clean text that your chunking and embedding pipeline can process directly. For any production agentic AI tech stack, Unstructured is a foundational piece.

7.2 LlamaParse — llamaindex.ai/llamaparse

LlamaParse is LlamaIndex’s managed document parsing service, optimized specifically for converting complex PDFs (tables, charts, multi-column layouts) into LLM-ready Markdown. When standard parsing produces messy output that degrades retrieval quality, LlamaParse is typically the fix.

7.3 Firecrawl — firecrawl.dev

Firecrawl turns any website into clean, structured Markdown optimized for LLM ingestion. It handles JavaScript-rendered pages, sitemaps, and full-site crawls. For agents that need to stay current with web-based knowledge sources, Firecrawl is the cleanest ingestion solution available.

7.4 Airbyte — airbyte.com

Airbyte is an open-source data integration platform with 300+ connectors to databases, SaaS tools, and APIs. For agentic AI tech stack implementations that need to sync structured data from business systems (CRMs, ERPs, analytics platforms) into a retrieval pipeline, Airbyte handles the ETL layer reliably.

7.5 Apache Airflow — airflow.apache.org

Airflow is the standard workflow orchestration tool for data pipelines. When your ingestion pipeline needs to run on a schedule — re-indexing documents nightly, syncing new data from sources, or running batch embedding jobs — Airflow provides the scheduling, monitoring, and retry logic that production pipelines require.

7.6 Docling — github.com/DS4SD/docling

Docling is an open-source document conversion library developed by IBM Research. It excels at extracting structured content from PDFs and Office documents, preserving layout, tables, and reading order. For enterprise agentic AI tech stack deployments where document fidelity matters, Docling is a powerful and underrated tool.

8. Memory and Context Management

Memory is what separates a stateless chatbot from a genuinely useful AI agent. The memory layer of the agentic AI tech stack handles how agents store, retrieve, and reason over information across time — both within a single session and across many conversations.

8.1 Mem0 — mem0.ai

Mem0 (formerly EmbedChain) is a purpose-built memory layer for AI agents. It extracts facts, preferences, and context from conversations and stores them as structured memories that persist across sessions. Mem0 supports multiple storage backends and integrates with every major LLM. For personalized, stateful agents, it is the most plug-and-play memory solution in the agentic AI tech stack today.

8.2 Zep — getzep.com

Zep is a memory store designed specifically for AI assistants. It maintains a temporal knowledge graph of conversations, automatically summarizes long histories, and surfaces relevant past context when needed. Zep’s temporal modeling makes it particularly strong for agents that need to reason about the sequence and recency of past interactions.

8.3 Letta (formerly MemGPT) — letta.ai

Letta is a framework for building stateful AI agents with a memory management system inspired by OS memory hierarchies — in-context, archival, and recall storage work together to give agents effectively unlimited, queryable memory. Letta is one of the most technically sophisticated memory solutions in the agentic AI tech stack ecosystem.
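
The memory-hierarchy idea can be sketched with two tiers: a small in-context buffer and an unbounded archive that receives whatever is evicted. This is a simplified illustration under stated assumptions, not Letta's API. `TieredMemory`, its `context_limit`, and the keyword-based `recall` are hypothetical, and a real system would search the archive semantically.

```python
from collections import deque


class TieredMemory:
    """Keep the newest messages in context and move older ones to an archive."""

    def __init__(self, context_limit=3):
        self.context_limit = context_limit
        self.in_context = deque()   # what would be placed in the model's prompt
        self.archival = []          # everything evicted from the context window

    def add(self, message):
        self.in_context.append(message)
        # Evict oldest messages once the context budget is exceeded.
        while len(self.in_context) > self.context_limit:
            self.archival.append(self.in_context.popleft())

    def recall(self, keyword):
        """Return archived messages containing the keyword (case-insensitive)."""
        needle = keyword.lower()
        return [m for m in self.archival if needle in m.lower()]


memory = TieredMemory(context_limit=3)
for msg in ["My name is Ada", "I like Rust", "Book a flight", "To Berlin", "On Friday"]:
    memory.add(msg)
```

After the five messages above, the context holds the last three, while `memory.recall("name")` can still retrieve the archived introduction.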

8.4 Redis — redis.io

Redis is used throughout production agentic AI tech stack deployments as a fast, in-memory cache for short-term context, session state, and ephemeral agent working memory. Redis Stack adds vector search capabilities, making it possible to use a single Redis instance for both caching and semantic memory retrieval.

8.5 Cognee — cognee.ai

Cognee is an open-source memory and knowledge graph framework that builds structured representations of information from unstructured text. It extracts entities and relationships, builds knowledge graphs, and enables agents to reason over connected information rather than flat vector search results — a meaningful qualitative leap in memory capability.
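
To show why connected facts differ from flat search results, here is a small sketch of a knowledge graph stored as subject, relation, object triples with multi-hop lookup. It does not reflect Cognee's API. `TinyKnowledgeGraph` and the sample facts are hypothetical.

```python
from collections import defaultdict


class TinyKnowledgeGraph:
    """Store (subject, relation, object) facts and walk them hop by hop."""

    def __init__(self):
        self.edges = defaultdict(list)

    def add_fact(self, subject, relation, obj):
        self.edges[subject].append((relation, obj))

    def facts_within(self, entity, max_hops=2):
        """Collect facts reachable from entity in at most max_hops steps (breadth-first)."""
        seen = {entity}
        frontier = [entity]
        facts = []
        for _ in range(max_hops):
            next_frontier = []
            for node in frontier:
                for relation, obj in self.edges.get(node, []):
                    facts.append((node, relation, obj))
                    if obj not in seen:
                        seen.add(obj)
                        next_frontier.append(obj)
            frontier = next_frontier
        return facts


kg = TinyKnowledgeGraph()
kg.add_fact("Alice", "works_at", "Acme")
kg.add_fact("Acme", "located_in", "Berlin")
kg.add_fact("Berlin", "in_country", "Germany")
nearby = kg.facts_within("Alice", max_hops=2)
```

A two-hop walk from `Alice` reaches the fact that Acme is in Berlin, even though no single stored fact mentions both Alice and Berlin.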

8.6 LangMem — langchain.com

LangMem is LangChain’s native memory abstraction layer, providing standard interfaces for conversation buffers, summary memory, entity memory, and vector store memory. For teams already building on LangChain, LangMem provides the simplest path to adding stateful memory to an existing agentic AI tech stack.

Comparison Table: Agentic AI Tech Stack Tools at a Glance

| Category | Tool | Open Source | Managed Cloud | Self-Hostable | Best For |
|---|---|---|---|---|---|
| Deployment | Modal | No | Yes | No | Serverless AI compute |
| Deployment | Docker | Yes | No | Yes | Containerized runtime |
| Deployment | AWS Bedrock | No | Yes | No | Enterprise cloud-native |
| Monitoring | LangSmith | Partial | Yes | Yes | LangChain tracing |
| Monitoring | Helicone | Yes | Yes | Yes | Zero-code LLM logging |
| Monitoring | Langfuse | Yes | Yes | Yes | Open-source observability |
| Foundation Model | Claude | No | Yes | No | Long context, accuracy |
| Foundation Model | Llama 3 | Yes | No | Yes | Cost-efficient open weight |
| Foundation Model | Gemini | No | Yes | No | Multimodal agents |
| Orchestration | LangGraph | Yes | Partial | Yes | Stateful multi-step agents |
| Orchestration | CrewAI | Yes | No | Yes | Multi-agent teams |
| Orchestration | AutoGen | Yes | No | Yes | Conversational multi-agent |
| Vector DB | Pinecone | No | Yes | No | Managed production scale |
| Vector DB | Qdrant | Yes | Yes | Yes | Performance-critical search |
| Vector DB | pgvector | Yes | No | Yes | Lean Postgres-native setup |
| Embedding | Voyage AI | No | Yes | No | Domain-specific embeddings |
| Embedding | BGE | Yes | No | Yes | Top MTEB benchmark scores |
| Embedding | Nomic Embed | Yes | No | Yes | Long context, local use |
| Ingestion | Unstructured | Yes | Yes | Yes | Multi-format document parsing |
| Ingestion | Firecrawl | Yes | Yes | Yes | Web content to Markdown |
| Ingestion | Docling | Yes | No | Yes | PDF/Office fidelity |
| Memory | Mem0 | Yes | Yes | Yes | Persistent cross-session memory |
| Memory | Zep | Yes | Yes | Yes | Temporal conversation memory |
| Memory | Letta | Yes | No | Yes | OS-inspired hierarchical memory |

How These Layers Fit Together

Here is a practical picture of how a production agentic AI tech stack flows end-to-end:

Raw data enters through the ingestion layer (Unstructured, Firecrawl, Docling), gets embedded by an embedding model (Voyage AI, BGE, text-embedding-3), and lands in a vector database (Pinecone, Qdrant, Weaviate). When a user interacts with the agent, the orchestration framework (LangGraph, CrewAI) coordinates reasoning, retrieves relevant context, checks memory (Mem0, Zep) for prior interactions, and calls a foundation model (Claude, GPT-4o, Llama) to generate a response. The whole thing runs on infrastructure (Modal, Docker, Kubernetes) and is observed through an evaluation and monitoring layer (LangSmith, Langfuse, W&B).
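
The request-time half of that flow can be sketched as a single function that takes each layer as a plug-in. Everything here is a hypothetical stand-in: `run_turn`, `keyword_retrieve`, `echo_model`, and `DOCS` are illustrative names, with a keyword match in place of vector search and a stub in place of a real model call.

```python
DOCS = ["Qdrant is written in Rust.", "pgvector extends PostgreSQL."]


def _tokens(text):
    """Lowercased words with trailing punctuation removed."""
    return {word.strip("?.,").lower() for word in text.split()}


def keyword_retrieve(question):
    # Stand-in for embedding the question and querying a vector database.
    return [doc for doc in DOCS if _tokens(question) & _tokens(doc)]


def echo_model(prompt):
    # Stand-in for a foundation model call.
    return f"model saw {len(prompt.splitlines())} prompt lines"


def run_turn(question, retrieve, memory, generate):
    """One agent turn: retrieve context, read memory, call the model, store the exchange."""
    context = retrieve(question)
    history = list(memory)
    prompt = "\n".join([
        "Context: " + " | ".join(context),
        "History: " + " | ".join(history),
        "Question: " + question,
    ])
    reply = generate(prompt)
    memory.append(f"Q: {question} A: {reply}")
    return reply


memory = []
reply = run_turn("What is Qdrant written in?", keyword_retrieve, memory, echo_model)
```

Because each layer is passed in as an argument, any one of them can be swapped for a real implementation without touching the others, which is the practical payoff of thinking in layers.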

That is the complete agentic AI tech stack as it exists in 2026.


Frequently Asked Questions

1. What is an agentic AI tech stack? An agentic AI tech stack is the full set of technologies required to build, deploy, and operate autonomous AI agents — systems that can plan, reason, use tools, and complete multi-step tasks with minimal human input. It spans infrastructure, models, orchestration, memory, retrieval, and monitoring.

2. How is the agentic AI tech stack different from a regular LLM stack? A standard LLM stack handles a single turn: user sends a message, model responds. The agentic AI tech stack adds memory across sessions, tool use, multi-step planning, multiple coordinated agents, and the evaluation infrastructure needed to ensure agents behave reliably over time.

3. Which orchestration framework should I start with for the agentic AI tech stack? LangChain and LangGraph are the most accessible starting points. LangGraph is particularly recommended for any workflow with conditional logic, loops, or state that needs to persist between agent steps.

4. Do I need a vector database in my agentic AI tech stack? If your agent needs to retrieve information from any document corpus or maintain semantic memory, yes. pgvector is the lightest-weight entry point. Pinecone and Qdrant are better choices for production scale.

5. What is the most cost-efficient foundation model for the agentic AI tech stack? Meta Llama 3 and Mistral Small offer the best performance-to-cost ratio among openly available models. For API-based usage, Claude Haiku and GPT-4o-mini are the most cost-efficient options from the major providers.

6. How do I add memory to my AI agent? Mem0 and Zep are the fastest paths to persistent memory. For teams using LangChain, LangMem provides native memory abstractions. For more sophisticated, graph-based memory, Cognee and Letta offer richer but more complex solutions.

7. What monitoring tools are essential for the agentic AI tech stack? At minimum, every production agent deployment needs trace logging (LangSmith or Langfuse), cost tracking (Helicone), and some form of automated evaluation (Braintrust or Phoenix). Running agents without observability is a common cause of undetected regressions.

8. Can I self-host my entire agentic AI tech stack? Yes, with some trade-offs. Llama for models, Weaviate or Milvus for vector search, LangGraph for orchestration, Unstructured for ingestion, Langfuse for monitoring, and Letta for memory — all can be self-hosted. The main costs are operational complexity and the engineering time required to maintain each layer.

9. What is the best embedding model for an agentic AI tech stack? It depends on your use case. For general English content, OpenAI text-embedding-3-small is the practical default. For domain-specific applications, Voyage AI’s specialized models often outperform general models by a meaningful margin. For fully self-hosted pipelines, BGE-M3 is one of the strongest open-weight options.

10. How do I evaluate the quality of my agentic AI tech stack? Define task-specific evaluation criteria first — accuracy, tool use correctness, hallucination rate, latency, cost-per-task. Then use Braintrust, Phoenix, or W&B Weave to run automated evals on a representative dataset. Build evals into your CI/CD pipeline so regressions surface before they reach users.

Building the right agentic AI tech stack is an iterative process. Start with the layers that matter most for your specific use case, get something working in production, and add complexity where it genuinely solves a problem. The best agentic AI tech stack is the one your team can actually ship, maintain, and improve over time.
